How to Build a Strategic Prompt Research Process That Drives AI Visibility and Bottom-Funnel Conversions

AI search has changed how people discover, compare, and choose products. Traditional SEO still matters, but it no longer covers the full journey.

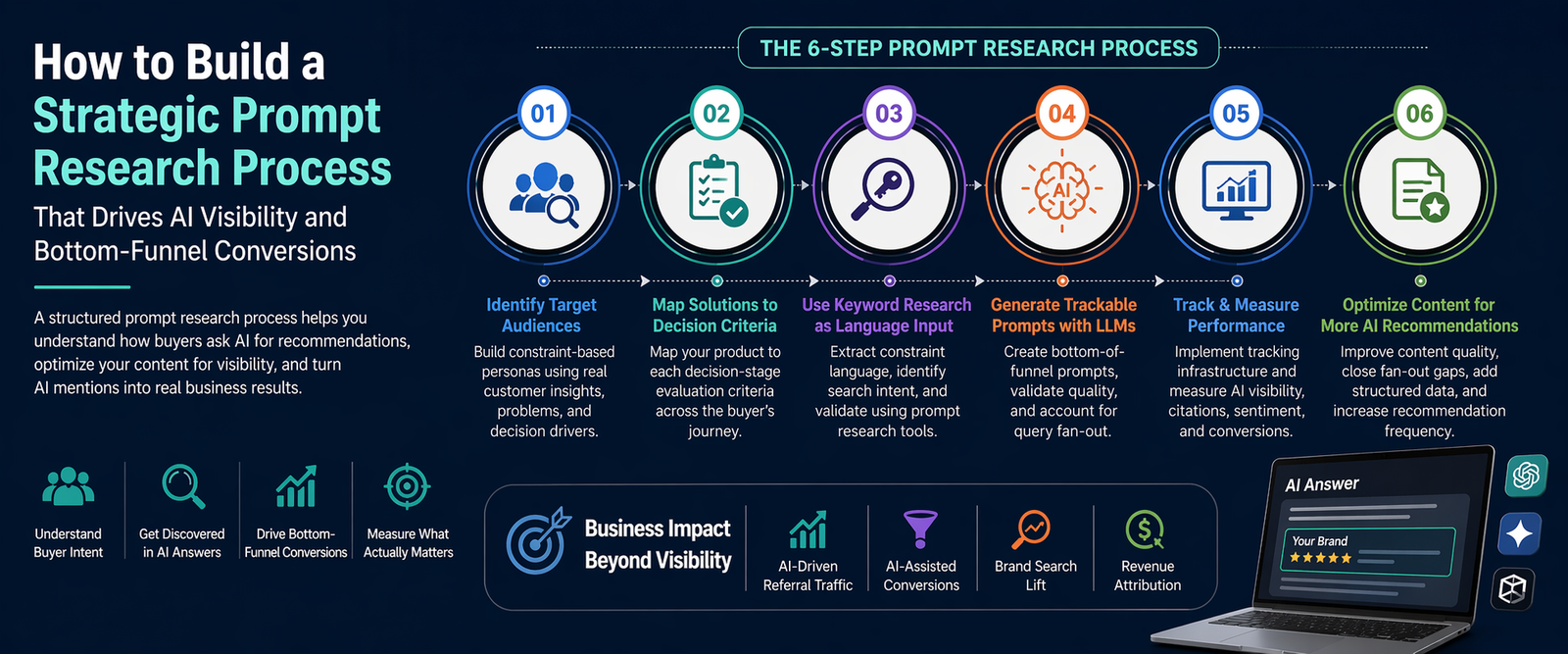

A strategic prompt research process helps you understand how buyers ask AI tools for recommendations, how AI systems compare solutions, and what content makes your brand more likely to appear in answer engines.

Google confirms that AI Mode and AI Overviews may use “query fan-out,” where one user question can trigger multiple related searches across subtopics and data sources. That means brands now need to optimize for the full decision context, not just one keyword at a time.

Understanding the Fundamental Differences Between Prompt Research and Keyword Research

Prompt research is not keyword research with longer sentences. It studies how users ask AI systems for help when they want a decision, shortlist, comparison, or recommendation.

Keyword research focuses on search demand. Prompt research focuses on recommendation behavior.

Data Availability and Historical Context

Keyword research has years of historical data behind it. Prompt research does not.

SEO tools can estimate search volume, keyword difficulty, CPC, SERP features, and ranking history. Prompt research usually starts with less stable data because AI platforms do not provide full public prompt volume, citation frequency, or recommendation logs.

That does not make prompt research weak. It means marketers must build their own evidence system.

A strong prompt research process combines:

- Keyword patterns

- Customer interviews

- Sales call notes

- Review mining

- Competitor comparison language

- AI response testing

- Referral and conversion tracking

This gives you a practical view of how real buyers describe their problems before they are ready to convert.

Response Volatility Versus Ranking Stability

Google rankings can move, but AI responses can change faster.

A page may rank in position three for months. An AI response may mention your brand today, ignore it tomorrow, and cite a competitor next week.

This happens because AI systems often generate answers from multiple sources, query variations, context signals, and model behavior. Google also says AI Mode and AI Overviews may use different models and techniques, so responses and links can vary.

That volatility changes the goal.

Instead of asking, “Do we rank for this keyword?” ask, “How often do we appear when buyers ask for help choosing a solution like ours?”

Intent Recognition and Recommendation Triggers

Prompt research is strongest at the bottom of the funnel.

People often use AI tools when they want a shortlist, comparison, buying guide, workflow, alternative, or recommendation. These prompts usually contain strong decision signals.

For example:

“What is the best project management software for a 20-person agency with client approval workflows?”

That prompt includes product category, team size, use case, constraint, and decision intent.

Your job is to identify these recommendation triggers and build content that directly answers them.

Step One: Identifying Target Audiences Through Constraint-Based Persona Development

Traditional personas describe job titles and demographics, which rarely appear in the questions buyers ask AI tools. Prompt research needs constraint-based personas.

A constraint-based persona defines the buyer by what limits their decision.

Essential Persona Characteristics for Prompt Research

A useful prompt research persona should include the buyer’s situation, not just their job title.

For example, “Marketing Manager” is too broad. A better persona is:

“Marketing manager at a 15-person SaaS startup who needs an affordable analytics tool that integrates with HubSpot, supports attribution reporting, and does not require a full-time data analyst.”

This gives you prompt-ready language.

Important persona characteristics include:

- Role and responsibility

- Company size

- Budget level

- Technical skill

- Existing tools

- Buying urgency

- Compliance needs

- Integration requirements

- Main objections

- Success criteria

These details help you create prompts that sound like real buyer questions, not generic SEO queries.

Sources for Constraint Discovery

You can find constraints in places where customers speak naturally.

Sales calls, support tickets, reviews, Reddit threads, comparison pages, demos, onboarding forms, and lost-deal notes often reveal better language than keyword tools.

Look for phrases such as:

- “We need something that works with…”

- “Our biggest issue is…”

- “We switched because…”

- “Too expensive for…”

- “Hard to set up…”

- “Best option for a small team…”

These phrases become raw material for bottom-funnel prompts.

The best prompt research does not start with “What should we rank for?” It starts with “What would a qualified buyer ask before choosing?”

Step Two: Mapping Product Solutions to Decision-Stage Evaluation Criteria

AI visibility improves when your content maps clearly to how buyers compare options.

At the bottom of the funnel, people do not want vague benefits. They want proof, trade-offs, pricing logic, feature clarity, and fit.

The Five-Layer Solution Framework

Use a five-layer solution framework to connect your product to real decision criteria.

The five layers are:

- Problem layer: What pain does the buyer want to solve?

- Use-case layer: What job are they trying to complete?

- Constraint layer: What limits their choice?

- Comparison layer: What alternatives are they considering?

- Proof layer: What evidence makes the choice credible?

For example, a CRM company should not only write “best CRM software.” It should create content for prompts like:

“Best CRM for a small B2B sales team that needs email tracking, pipeline automation, and simple reporting.”

That prompt has a stronger buying signal than a broad keyword.

Validating Evaluation Criteria Through Competitive Analysis

Competitor analysis helps you understand what AI systems may already associate with your category.

Run your target prompts in ChatGPT, Gemini, Perplexity, Copilot, and Google AI Mode. Then document which brands appear, what criteria the AI uses, and which sources it cites.

Do not only record who gets mentioned.

Record why they get mentioned.

Look for recurring evaluation criteria such as:

- Ease of use

- Pricing

- Integrations

- Security

- Customer support

- Setup time

- Scalability

- Industry fit

- Reviews

- Free trial availability

These criteria should shape your content strategy, product pages, comparison pages, and FAQ sections.

Step Three: Leveraging Keyword Research as Language Input for Prompt Construction

Keyword research still matters. It gives you the language buyers already use.

The mistake is treating keywords as the final strategy. In prompt research, keywords become ingredients for more specific AI questions.

Extracting Constraint Language from Keyword Patterns

Start with your normal keyword list, then extract modifiers.

For example, from keyword research you may find patterns like:

- “best CRM for small business”

- “CRM with WhatsApp integration”

- “affordable CRM for startups”

- “HubSpot alternative for agencies”

- “CRM for real estate agents”

These modifiers reveal constraints.

Turn them into prompt components:

- Business type

- Price sensitivity

- Current tool

- Required integration

- Industry

- User skill level

- Desired outcome

Now you can create bottom-funnel prompts that match how buyers ask AI tools for help.
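As a rough sketch, the components above can be combined into candidate prompts programmatically. The component values and the template wording here are hypothetical examples, not a recommended set. Swap in modifiers from your own keyword list.

```python
from itertools import product

# Hypothetical component values; replace them with modifiers from your own keyword list.
COMPONENTS = {
    "budget": ["affordable"],
    "business_type": ["small business", "startup"],
    "integration": ["WhatsApp", "HubSpot"],
}

# Hypothetical template; the placeholders must match the keys in COMPONENTS.
TEMPLATE = (
    "What is the best {budget} CRM for a {business_type} "
    "that needs {integration} integration?"
)


def build_prompts(components, template):
    """Return one prompt for every combination of component values."""
    keys = list(components)
    value_lists = [components[key] for key in keys]
    return [
        template.format(**dict(zip(keys, combo)))
        for combo in product(*value_lists)
    ]


prompts = build_prompts(COMPONENTS, TEMPLATE)
for prompt in prompts:
    print(prompt)
```

Combinations grow quickly, so review the output by hand and keep only the prompts a real buyer would plausibly ask.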

Identifying Search Intent Distribution

Not all keywords deserve prompt tracking.

Group your keywords by intent before turning them into prompts.

Use four simple buckets:

- Informational: “What is prompt research?”

- Commercial: “Best AI visibility tools”

- Comparative: “Tool A vs Tool B”

- Transactional: “AI visibility software pricing”

Prompt research should focus mostly on commercial, comparative, and transactional intent.

These are the moments when AI recommendations can influence revenue.
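A first pass at this grouping can be automated with simple rules. The sketch below is a keyword-matching heuristic, not a real intent classifier, and the trigger words are assumptions you should tune against your own list.

```python
import re

# Illustrative trigger words only. Rules are checked in order, so a keyword
# such as "best HubSpot alternative" lands in the comparative bucket.
INTENT_RULES = [
    ("comparative", re.compile(r"\b(vs|versus|compare|alternative|alternatives)\b")),
    ("transactional", re.compile(r"\b(pricing|price|cost|buy|trial|demo)\b")),
    ("commercial", re.compile(r"\b(best|top|review|reviews)\b")),
]

# Buckets worth turning into tracked prompts.
TRACKED_INTENTS = {"commercial", "comparative", "transactional"}


def classify_intent(keyword):
    """Return the first matching intent bucket, or 'informational' by default."""
    text = keyword.lower()
    for label, pattern in INTENT_RULES:
        if pattern.search(text):
            return label
    return "informational"


keywords = [
    "What is prompt research?",
    "Best AI visibility tools",
    "Tool A vs Tool B",
    "AI visibility software pricing",
]

buckets = {kw: classify_intent(kw) for kw in keywords}
to_track = [kw for kw, intent in buckets.items() if intent in TRACKED_INTENTS]
print(buckets)
print(to_track)
```

Treat the output as a starting point and correct misclassified keywords manually before building prompts from them.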

Validation Through Prompt Research Tools

Prompt research tools can speed up testing, but they should not replace judgment.

Use them to monitor brand mentions, source citations, competitor presence, and answer sentiment across AI platforms. Then manually check important prompts because AI answers can vary based on wording, timing, user context, and platform.

A practical validation process looks like this:

- Build 50 to 100 candidate prompts.

- Test each prompt across multiple AI platforms.

- Record brand visibility, citations, and sentiment.

- Identify missing content or weak proof.

- Update pages and retest monthly.

The goal is not a giant prompt spreadsheet. The goal is a reliable decision-intent map.

Step Four: Generating Trackable Bottom-of-Funnel Prompts Using Large Language Models

Large language models can help generate prompt ideas, but you need a strict template.

Without structure, the model will create fluffy prompts that sound clever but do not match buying behavior.

Constructing the Prompt Generation Template

Use a prompt generation template that includes your audience, product category, constraints, decision stage, and conversion goal.

Example template:

“Generate 25 bottom-funnel prompts a buyer might ask an AI assistant when comparing [product category]. The buyer is [persona]. They care about [constraints]. Include prompts for alternatives, comparisons, pricing, integrations, implementation, and risk reduction. Make each prompt specific enough to trigger a product recommendation.”

This template works because it forces the AI to think like a buyer with constraints.

Good prompt outputs may include:

- “What is the best [category] for [persona] with [integration need]?”

- “Which [category] works best for [team size] and [budget]?”

- “What are the top alternatives to [competitor] for [use case]?”

- “Compare [brand] and [competitor] for [specific workflow].”
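If you reuse the template across personas, it helps to fill it in with code so the wording stays consistent. This is a minimal sketch: the function name and the example persona values are illustrative, and the filled-in text still has to be sent to whichever model you use.

```python
# The template text follows the example above; {count} is added so the
# number of prompts can be changed per run.
GENERATION_TEMPLATE = (
    "Generate {count} bottom-funnel prompts a buyer might ask an AI assistant "
    "when comparing {category}. The buyer is {persona}. "
    "They care about {constraints}. Include prompts for alternatives, "
    "comparisons, pricing, integrations, implementation, and risk reduction. "
    "Make each prompt specific enough to trigger a product recommendation."
)


def build_generation_prompt(category, persona, constraints, count=25):
    """Fill the template with one persona and its list of constraints."""
    return GENERATION_TEMPLATE.format(
        count=count,
        category=category,
        persona=persona,
        constraints=", ".join(constraints),
    )


generation_prompt = build_generation_prompt(
    category="analytics tools",
    persona="a marketing manager at a 15-person SaaS startup",
    constraints=[
        "HubSpot integration",
        "attribution reporting",
        "no full-time data analyst",
    ],
)
print(generation_prompt)
```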

Validating Prompt Quality Through Response Testing

A prompt is only useful if it produces decision-stage responses.

After generating prompts, test whether the AI answer includes product names, comparison criteria, buying advice, feature evaluation, or source links.

If the answer stays too educational, the prompt may sit too high in the funnel.

For example, this is too broad:

“How does CRM software work?”

This is better:

“What is the best CRM for a 10-person B2B sales team that needs fast setup, email tracking, and affordable monthly pricing?”

The second prompt has bottom-funnel intent because it asks for a recommendation under real constraints.
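You can pre-screen saved answers with a simple heuristic before reviewing them by hand. The sketch below assumes you already have the answer text and a list of brand names in your category. The brand names and criteria terms are made up for illustration, and substring matching will miss paraphrases.

```python
# Illustrative criteria terms; extend them with criteria you see in real answers.
CRITERIA_TERMS = ["pricing", "integration", "setup", "support", "ease of use"]


def decision_signals(answer, known_brands):
    """Collect rough decision-stage signals from one AI answer."""
    text = answer.lower()
    return {
        "brands": [b for b in known_brands if b.lower() in text],
        "criteria": [t for t in CRITERIA_TERMS if t in text],
        "has_link": "http://" in text or "https://" in text,
    }


def is_decision_stage(answer, known_brands):
    """Treat an answer as decision-stage if it names a brand and a criterion."""
    signals = decision_signals(answer, known_brands)
    return len(signals["brands"]) >= 1 and len(signals["criteria"]) >= 1


# Hypothetical brand names and answers, for illustration only.
brands = ["ExampleCRM", "SampleDesk"]
educational = "CRM software stores contact records and tracks interactions with customers."
decision = (
    "For a 10-person team, ExampleCRM has the fastest setup, "
    "while SampleDesk offers lower pricing."
)

print(is_decision_stage(educational, brands))
print(is_decision_stage(decision, brands))
```

Prompts whose answers repeatedly fail this check are candidates for rewording or removal from the tracked set.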

Accounting for Query Fan-Out in Prompt Set Design

Prompt sets should cover related subtopics, not just one exact phrasing.

Google says AI Mode can divide a question into subtopics and search across multiple data sources at the same time. Google also says Deep Search can use the same query fan-out approach at a larger scale, issuing hundreds of searches for deeper research tasks.

So your prompt set should include the main query and likely fan-out branches.

For example, one main prompt may be:

“Best ecommerce platform for a small fashion brand.”

Likely fan-out branches include:

- Pricing comparison

- Payment options

- Inventory management

- SEO features

- Shipping integrations

- Theme flexibility

- Customer support

- Migration from Shopify or WooCommerce

If your content does not answer these branches, AI systems may find better evidence elsewhere.
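A quick way to spot missing branches is to check page copy against a term list per branch. This is a crude substring check under assumed search terms, so it only flags candidates for manual review. It does not measure how well a branch is answered.

```python
# Branch names come from the list above; the search terms are assumptions.
FAN_OUT_BRANCHES = {
    "Pricing comparison": ["pricing", "price", "cost"],
    "Payment options": ["payment"],
    "Inventory management": ["inventory"],
    "SEO features": ["seo"],
    "Shipping integrations": ["shipping"],
    "Theme flexibility": ["theme"],
    "Customer support": ["support"],
    "Migration from Shopify or WooCommerce": ["migration", "migrate"],
}


def missing_branches(page_text, branches=FAN_OUT_BRANCHES):
    """Return branch names with no matching term in the page text."""
    text = page_text.lower()
    return [
        name
        for name, terms in branches.items()
        if not any(term in text for term in terms)
    ]


# Hypothetical page copy.
page_text = """
Pricing starts with a free plan. Payment gateways include cards and wallets.
Inventory syncs across channels, and SEO settings are editable per page.
"""

print(missing_branches(page_text))
```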

Step Five: Implementing Systematic Prompt Tracking and Performance Measurement

Prompt tracking turns AI visibility from a guessing game into a repeatable process.

You need a simple system that records where your brand appears, how it appears, and whether that visibility connects to business outcomes.

Establishing Tracking Infrastructure

Create a prompt tracking sheet or dashboard with these fields:

- Prompt

- Funnel stage

- Persona

- Platform tested

- Date tested

- Brand mentioned or not

- Brand position in answer

- Competitors mentioned

- Sources cited

- Sentiment

- Recommended action

- Related landing page

- Conversion page
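If a spreadsheet becomes hard to maintain, the same fields can live in a small typed record that exports to CSV. The sketch below is one possible layout. The field names mirror the list above, and the sample row (brand, URLs, paths) is entirely hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date
from typing import List, Optional


@dataclass
class PromptTest:
    prompt: str
    funnel_stage: str
    persona: str
    platform: str
    date_tested: date
    brand_mentioned: bool
    brand_position: Optional[int]  # None when the brand is not mentioned
    competitors: List[str]
    sources: List[str]
    sentiment: str
    recommended_action: str
    landing_page: str
    conversion_page: str


def to_csv(rows):
    """Serialize tracking rows to CSV text, flattening list fields."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(PromptTest)])
    writer.writeheader()
    for row in rows:
        record = asdict(row)
        record["competitors"] = "; ".join(row.competitors)
        record["sources"] = "; ".join(row.sources)
        record["date_tested"] = row.date_tested.isoformat()
        writer.writerow(record)
    return buffer.getvalue()


# Hypothetical example row.
sample = PromptTest(
    prompt="Best CRM for a 10-person B2B sales team",
    funnel_stage="bottom",
    persona="Sales lead at a small B2B company",
    platform="ChatGPT",
    date_tested=date(2025, 1, 15),
    brand_mentioned=True,
    brand_position=2,
    competitors=["ExampleCRM", "SampleDesk"],
    sources=["https://example.com/crm-comparison"],
    sentiment="positive",
    recommended_action="Add setup-time proof to comparison page",
    landing_page="/crm-for-small-teams",
    conversion_page="/demo",
)

csv_text = to_csv([sample])
print(csv_text)
```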

Then connect this with analytics.

Google Analytics allows custom channel groups, and Google’s own documentation gives an example for creating an “AI assistants” channel using regex patterns for tools such as ChatGPT, Gemini, Copilot, Claude, and Perplexity.

That means you can separate AI assistant traffic from generic referral traffic and monitor it more clearly.
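To illustrate the idea, here is a small sketch that classifies referrer hostnames with a regular expression. The pattern is my own illustration, not the one from Google's documentation, and the domain list is an assumption that you should verify against the sources that actually appear in your reports.

```python
import re

# Assumed referrer domains for common AI assistants. Check your own
# source/medium data, because these can change over time.
AI_ASSISTANT_PATTERN = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|gemini\.google\.com|"
    r"copilot\.microsoft\.com|claude\.ai|perplexity\.ai)$"
)


def channel_for(source):
    """Return 'AI assistants' for known assistant hostnames, else 'Other'."""
    if AI_ASSISTANT_PATTERN.search(source.strip().lower()):
        return "AI assistants"
    return "Other"


for source in ["chatgpt.com", "www.perplexity.ai", "google.com", "notclaude.ai"]:
    print(source, "->", channel_for(source))
```

The `(^|\.)` prefix keeps lookalike hostnames such as `notclaude.ai` out of the channel while still matching subdomains.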

Determining Optimal Prompt Set Size

You do not need thousands of prompts at the start.

Most businesses should begin with 50 to 150 high-intent prompts. That is enough to find visibility gaps without creating tracking chaos.

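Use this structure for a full set of 110 to 150 prompts, and scale each bucket down proportionally if you start closer to 50: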

- 20 core category prompts

- 20 competitor alternative prompts

- 20 use-case prompts

- 20 industry-specific prompts

- 20 pricing or implementation prompts

- 10 to 50 extra prompts for priority personas

Review the prompt set every month. Remove prompts that do not trigger recommendations. Add prompts based on sales objections, AI referral landing pages, and competitor changes.

Interpreting AI Visibility Metrics

AI visibility is not only “mentioned or not mentioned.”

Track multiple metrics:

- Mention rate

- Citation rate

- Recommendation frequency

- Competitor overlap

- Sentiment quality

- Source quality

- Prompt-level conversion value

- Referral traffic from AI tools

- Assisted conversion impact

A brand mention with weak sentiment may not help. A citation on a high-intent comparison prompt may matter more than 20 vague mentions in educational answers.

Focus on visibility that can influence revenue.
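The rate-style metrics are simple ratios over your test results. A minimal sketch, assuming each result is a record with hypothetical boolean fields for mention, citation, and recommendation:

```python
# Hypothetical test results; field names are illustrative.
results = [
    {"prompt": "A", "brand_mentioned": True, "brand_cited": True, "brand_recommended": True},
    {"prompt": "B", "brand_mentioned": True, "brand_cited": False, "brand_recommended": True},
    {"prompt": "C", "brand_mentioned": True, "brand_cited": False, "brand_recommended": False},
    {"prompt": "D", "brand_mentioned": False, "brand_cited": False, "brand_recommended": False},
]


def visibility_metrics(results):
    """Return mention, citation, and recommendation rates as fractions of all tests."""
    total = len(results)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "recommendation_rate": 0.0}
    return {
        "mention_rate": sum(r["brand_mentioned"] for r in results) / total,
        "citation_rate": sum(r["brand_cited"] for r in results) / total,
        "recommendation_rate": sum(r["brand_recommended"] for r in results) / total,
    }


metrics = visibility_metrics(results)
print(metrics)
```

Because answers vary between runs, these rates are more meaningful when each prompt is tested several times per platform.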

Step Six: Optimizing Content for Enhanced AI Recommendation Frequency

AI recommendation frequency improves when your content gives clear, evidence-backed, structured answers.

Do not write only for AI systems. Write for buyers first, then structure your content so AI systems can understand and cite it.

Content Optimization Strategies Based on Princeton Research

The Generative Engine Optimization (GEO) paper, written by researchers at Princeton University and collaborating institutions, introduced GEO-bench and reported that GEO methods could improve visibility in generative engine responses by up to 40%. The research also notes that results vary by domain, which means one-size-fits-all AI optimization does not work.

The practical lesson is simple: add substance.

Strong AI-ready content should include:

- Clear claims

- Real statistics

- Expert quotes

- Comparison tables

- Step-by-step frameworks

- Source citations

- Specific use cases

- Product limitations

- Updated information

Generic content says, “Our platform saves time.”

Better content says, “Our platform reduces manual reporting by connecting CRM, ad platform, and revenue data in one dashboard, which helps small teams avoid spreadsheet-based reporting.”

Specificity gives AI systems better material to summarize.

Addressing Query Fan-Out Gaps

A query fan-out gap happens when your page answers the main question but misses the related questions.

For example, a page about “best accounting software for freelancers” may explain features but ignore tax reports, invoice templates, payment gateways, mobile app quality, and pricing tiers.

That creates gaps.

To fix them, add self-contained sections that answer:

- Who is this best for?

- Who should avoid it?

- What does it integrate with?

- How much does it cost?

- How long does setup take?

- What are the main alternatives?

- What proof supports the claim?

This helps both human buyers and AI systems.

Structured Data Implementation for Enhanced AI Understanding

Structured data does not guarantee AI recommendations, but it helps machines understand your content more reliably.

Google says structured data helps it understand page content and can make product information appear in richer ways in Search, Google Images, and Google Lens. Product markup can support details like price, availability, review ratings, shipping information, and return policy data.

For bottom-funnel pages, consider relevant schema such as:

- Product

- SoftwareApplication

- FAQPage

- Review

- AggregateRating

- Organization

- BreadcrumbList

- HowTo

- Dataset, when publishing proprietary data
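As an illustration, JSON-LD for a SaaS product page can be generated from a plain dictionary. The property names below come from schema.org, but the product name, price, and currency are placeholders. Publish only values that match what the page actually shows.

```python
import json

# Placeholder values; replace them with your real, current product data.
software_schema = {
    "@context": "https://schema.org",
    "@type": "SoftwareApplication",
    "name": "Example Analytics",
    "applicationCategory": "BusinessApplication",
    "operatingSystem": "Web",
    "offers": {
        "@type": "Offer",
        "price": "49.00",
        "priceCurrency": "USD",
    },
}

json_ld = json.dumps(software_schema, indent=2)

# Wrap the JSON in the script tag that goes into the page HTML.
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Validate the output with a structured data testing tool before deploying, since required and recommended properties differ by schema type.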

For ecommerce and SaaS, keep product data accurate. Google Merchant Center says product data helps match products to the right queries, and inaccurate or missing data can cause display issues or disapprovals.

Measuring Business Impact Beyond Visibility Metrics

AI visibility only matters if it supports business growth.

Mentions are useful, but conversions pay the bills. Funny how finance teams prefer revenue over “vibes.”

Tracking AI-Driven Referral Traffic

Start by building a separate AI traffic view in GA4.

Track sources such as ChatGPT, Perplexity, Gemini, Claude, Copilot, and other AI assistants. Use source/medium reports, referral data, landing pages, and custom channel groups.

Look at:

- Sessions

- Engaged sessions

- Landing pages

- Conversions

- Assisted conversions

- Demo requests

- Trial signups

- Revenue per AI channel

This helps you see which AI platforms send traffic and which pages convert.
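Once the data is exported, a per-source summary is easy to compute. The sketch below assumes rows with hypothetical `source`, `sessions`, and `conversions` fields. The numbers are invented for illustration, and the column names in your own export may differ.

```python
from collections import defaultdict

# Invented example rows, e.g. one row per source and landing page.
rows = [
    {"source": "chatgpt.com", "sessions": 120, "conversions": 6},
    {"source": "perplexity.ai", "sessions": 40, "conversions": 4},
    {"source": "chatgpt.com", "sessions": 80, "conversions": 2},
]


def summarize_by_source(rows):
    """Sum sessions and conversions per source and add a conversion rate."""
    totals = defaultdict(lambda: {"sessions": 0, "conversions": 0})
    for row in rows:
        totals[row["source"]]["sessions"] += row["sessions"]
        totals[row["source"]]["conversions"] += row["conversions"]

    summary = {}
    for source, t in totals.items():
        rate = t["conversions"] / t["sessions"] if t["sessions"] else 0.0
        summary[source] = {**t, "conversion_rate": rate}
    return summary


summary = summarize_by_source(rows)
for source, stats in summary.items():
    print(source, stats)
```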

Attribution Modeling for AI-Assisted Conversions

AI often assists the decision before the final click.

A buyer may ask ChatGPT for options, visit your comparison page, search your brand on Google, read reviews, and then convert through direct traffic.

That means last-click attribution may undervalue AI visibility.

Use a broader attribution view:

- AI referral as first touch

- Brand search lift as middle touch

- Direct or paid search as final touch

- CRM source notes from sales calls

- “How did you hear about us?” form fields

Prompt research works best when SEO, analytics, sales, and CRM data talk to each other.

Monitoring Brand Search Volume Trends

Brand search volume can reveal hidden AI influence.

If your brand appears more often in AI recommendations, some users may not click the AI citation. They may search your brand later.

Track trends for:

- Brand name

- Brand + reviews

- Brand + pricing

- Brand + alternatives

- Brand vs competitor

- Brand + use case

A rising trend in brand-modified searches can show growing market awareness, even when direct AI referral traffic looks small.

Strategic Considerations for Resource Allocation and Prioritization

Prompt research should guide smarter investment, not create another endless SEO task list.

The best strategy focuses on high-value decision prompts first.

Prioritizing High-Value Prompts Over Volume Maximization

Do not chase every possible prompt.

Prioritize prompts based on revenue potential, sales relevance, competitive gap, and conversion intent.

A prompt like “best enterprise payroll software for 500 employees with multi-country compliance” may be more valuable than 100 broad prompts about “what is payroll software.”

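Score each prompt from 1 to 5 for the criteria below, where 5 always means a stronger reason to prioritize (for example, weak current visibility or a beatable set of competitors scores high):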

- Buyer intent

- Product fit

- Revenue potential

- Competition level

- Current visibility

- Content gap size

Then optimize the prompts with the highest total score.
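The scoring can be sketched as a simple sum and sort. This example assumes equal weights and that every criterion is scored so that 5 means "more reason to prioritize" (so low current visibility gets a high score). The prompts and scores are invented.

```python
CRITERIA = [
    "buyer_intent",
    "product_fit",
    "revenue_potential",
    "competition_level",
    "current_visibility",
    "content_gap_size",
]


def total_score(scores):
    """Sum the six criteria after checking each is present and within 1 to 5."""
    for criterion in CRITERIA:
        if criterion not in scores:
            raise ValueError(f"missing score: {criterion}")
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"score out of range: {criterion}")
    return sum(scores[criterion] for criterion in CRITERIA)


def rank_prompts(scored_prompts):
    """Return prompts sorted from highest to lowest total score."""
    return sorted(
        scored_prompts,
        key=lambda item: total_score(item["scores"]),
        reverse=True,
    )


# Invented scores for two prompts.
scored_prompts = [
    {
        "prompt": "what is payroll software",
        "scores": dict(zip(CRITERIA, [1, 2, 1, 2, 3, 2])),
    },
    {
        "prompt": "best enterprise payroll software for 500 employees with multi-country compliance",
        "scores": dict(zip(CRITERIA, [5, 5, 5, 3, 4, 4])),
    },
]

ranked = rank_prompts(scored_prompts)
for item in ranked:
    print(total_score(item["scores"]), item["prompt"])
```

If some criteria matter more to your business, multiply them by a weight before summing rather than changing the 1 to 5 scale.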

Balancing AI Optimization with Traditional SEO Investment

AI optimization should not replace SEO.

Google says the same foundational SEO best practices still apply for AI features, including technical eligibility, Search policies, and helpful, reliable, people-first content.

That means your foundation still matters:

- Crawlability

- Indexability

- Page speed

- Internal links

- Helpful content

- Topic authority

- Clear page structure

- Accurate metadata

- Strong UX

Think of prompt research as an advanced layer on top of SEO, not a replacement.

Building Cross-Functional Alignment Around AI Visibility

AI visibility is not only a content team problem.

Product, sales, customer success, SEO, paid media, and analytics should all contribute.

Sales teams know objections. Product teams know technical strengths. Customer success knows real use cases. SEO knows search demand. Analytics knows what converts.

Bring them together once a month and review:

- Which prompts mention us?

- Which prompts mention competitors?

- What objections appear in AI answers?

- Which pages need stronger proof?

- Which content gaps affect sales?

This makes AI visibility a business strategy, not just an SEO experiment.

Advanced Tactics for Competitive Differentiation

Once the basics work, build assets competitors cannot copy quickly.

AI systems tend to favor useful, evidence-rich sources. Proprietary assets make your brand harder to ignore.

Developing Proprietary Data Assets

Original data gives AI systems something unique to cite.

Examples include:

- Industry benchmark reports

- Customer survey insights

- Pricing comparison studies

- Performance benchmarks

- Use-case adoption reports

- Trend reports

- Internal platform data, anonymized and aggregated

Google’s helpful content guidance asks whether content provides original information, reporting, research, or analysis. That aligns well with proprietary data assets.

If your competitors only rewrite the same listicles, original data can separate your brand fast.

Creating AI-Optimized Content Formats

AI systems can understand many content types, but clear structure helps.

Create formats that answer decision prompts directly:

- “Best for” comparison tables

- Alternative pages

- Migration guides

- Use-case landing pages

- Pricing explainers

- Implementation checklists

- Buyer guides

- Decision matrices

- Pros and cons sections

Do not hide the answer until paragraph 12. AI and humans both appreciate directness.

Start with the answer, then explain.

Building Authoritative Presence in AI Training Data Sources

AI visibility grows when your brand appears across trusted web sources.

Build presence in places that buyers and AI systems may use for evidence:

- Review platforms

- Industry publications

- Partner pages

- Documentation hubs

- Comparison websites

- Community discussions

- Research reports

- Case studies

- Product directories

This is not about spammy link building. It is about making your brand visible in the places where real evaluation happens.

Ethical Considerations and Long-Term Sustainability

Prompt research should improve accuracy, not manipulate AI answers.

The safest long-term strategy is to become genuinely easier to verify, compare, and recommend.

Maintaining Factual Accuracy in All Claims

Every claim should be true, specific, and supportable.

Do not invent statistics, fake customer numbers, fake reviews, or fake awards. AI systems may repeat bad information, and users may make real buying decisions from it.

Google’s AI content guidance says success in Search depends on original, high-quality, people-first content that demonstrates E-E-A-T, no matter how the content was produced. It also states that appropriate use of AI is not against guidelines when it is not used mainly to manipulate rankings.

Accuracy is not optional. It is the trust layer.

Respecting User Privacy in Persona Development

Use customer data carefully.

Prompt research does not require personal or sensitive details. You can analyze patterns without exposing private information.

Use anonymized and aggregated data from sales calls, forms, CRM notes, and support tickets. Remove names, emails, company secrets, health details, financial identifiers, and anything that could identify a person without consent.

Good persona development studies constraints. It does not exploit private data.

Avoiding Manipulative Optimization Tactics

Do not create misleading pages just to influence AI systems.

Avoid tactics like fake expert quotes, hidden content, review manipulation, doorway pages, or mass-produced comparison pages with no real analysis.

These tactics may create short-term visibility, but they damage trust and can create long-term search risk.

A better approach is simple: answer the buyer’s real question better than anyone else.

Preparing for Increased AI Platform Transparency

AI platforms will likely become more transparent over time.

Brands should prepare by keeping clean records of content changes, prompt tests, source citations, and performance data.

Document:

- What prompts you track

- Which pages support each prompt

- What changes you made

- When you tested again

- What visibility changed

- What conversions followed

This gives your team a defensible process instead of random AI experiments.

Emerging Trends Shaping the Future of Prompt Research

Prompt research will keep changing as AI search becomes more visual, personalized, and platform-specific.

The brands that win will treat prompt research as an ongoing intelligence system.

Multimodal Search Integration

AI search is no longer text-only.

Google’s AI Mode support documentation says users can ask questions with text, voice, or images, and Google’s India AI Mode announcement also highlights voice and image-based interaction.

This affects ecommerce, local SEO, travel, fashion, home services, education, and any category where images help users decide.

Future prompt research should include visual prompts such as:

- “What product is this?”

- “Find alternatives that look like this.”

- “Which brand makes this style?”

- “Compare this screenshot with better tools.”

Visual content, image SEO, product feeds, and structured data will matter more.

Platform-Specific Recommendation Algorithms

Each AI platform behaves differently.

ChatGPT, Gemini, Perplexity, Copilot, and Google AI Mode may use different retrieval systems, browsing behavior, source preferences, and answer formats.

So do not test only one platform.

Build platform-specific benchmarks:

- Which platforms mention your brand?

- Which cite your website?

- Which prefer third-party sources?

- Which summarize reviews?

- Which recommend competitors more often?

This helps you decide where to invest content, PR, partnerships, and structured data work.
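A per-platform benchmark can start as a mention rate grouped by platform. The sketch below uses invented test records with hypothetical `platform` and `brand_mentioned` fields. The same grouping works for citations or competitor mentions.

```python
from collections import defaultdict

# Invented test records.
tests = [
    {"platform": "ChatGPT", "brand_mentioned": True},
    {"platform": "ChatGPT", "brand_mentioned": False},
    {"platform": "Perplexity", "brand_mentioned": True},
    {"platform": "Gemini", "brand_mentioned": False},
]


def mention_rate_by_platform(tests):
    """Return the share of tests per platform in which the brand was mentioned."""
    counts = defaultdict(lambda: [0, 0])  # [mentions, total tests]
    for test in tests:
        counts[test["platform"]][0] += int(test["brand_mentioned"])
        counts[test["platform"]][1] += 1
    return {
        platform: mentions / total
        for platform, (mentions, total) in counts.items()
    }


benchmark = mention_rate_by_platform(tests)
print(benchmark)
```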

Increased Emphasis on Product Structured Data

Product data will become more important as AI tools answer shopping and comparison prompts.

Google says product structured data can help users see price, availability, reviews, shipping, and more in search results. Google Merchant Center also explains that structured data helps Google and other platforms read product data from HTML reliably.

For product-led brands, this means your product pages should be machine-readable and buyer-friendly.

Keep these details updated:

- Product name

- Price

- Availability

- Reviews

- Ratings

- Shipping

- Returns

- Images

- Variants

- Brand

- GTIN or identifiers, where relevant

Bad data creates bad visibility.

Personalization and User Context Factors

AI answers may become more personalized.

A user’s location, previous questions, device, task, language, and preferences may shape recommendations. That makes broad content less effective.

Brands need content for specific contexts:

- Best for beginners

- Best for agencies

- Best for enterprise teams

- Best for local businesses

- Best under a budget

- Best for privacy-sensitive teams

- Best for fast setup

- Best for migration from a competitor

Prompt research should model these contexts before competitors do.

Conclusion: Prompt Research Is the New Bottom-Funnel SEO Layer

Prompt research helps you understand how buyers ask AI systems for recommendations, comparisons, and decision support.

Keyword research tells you what people search for. Prompt research tells you how they ask AI to choose.

To build a strong process, start with constraint-based personas, map your product to decision criteria, convert keyword patterns into prompt sets, track AI visibility across platforms, optimize content with evidence, and connect results to conversions.

The winning brands will not chase every AI mention. They will focus on prompts that influence real buying decisions.